Did you know that AI has a favorite number?

Let's do a quick test. Open ChatGPT, Claude, Gemini, or whatever AI you use, and type in this prompt: “Choose a random number between 1 and 25.” Let me guess. Did it pick 17? Before we go any further, post your result in the comments! You'll see how quickly the same numbers repeat themselves.

Strange, isn't it? After all, it's “artificial intelligence,” powerful servers, millions of operations per second... and it behaves exactly like you do during simple psychological tests.

Now a second experiment. Don't think about it, answer in a split second: name any tool and any color!

You probably thought of a red hammer, right? This is a classic example of how your brain—though powerful—loves shortcuts and familiar patterns. When we think of color, we often choose red. When we think of a tool, a hammer is the first thing that comes to mind. AI does exactly the same thing, only on a gigantic scale.

The computer does not roll the dice, even if it says it does

We tend to think that when we ask a computer to do something random, it has some kind of digital dice inside. Nothing could be further from the truth. A computer is a mathematical machine. It doesn't “think” – it calculates.

What we call randomness in computer science usually comes from a pseudorandom number generator (PRNG), that is, a deterministic algorithm. Classic ones, such as the Linear Congruential Generator (LCG), compute each new number from the previous one, starting from a so-called seed.

Have you ever wondered where the machine gets its first impulse, the seed? Since it cannot “think” for itself, a simple generator often takes it from the system clock. It reads the current time with millisecond precision, say the string of digits 1715432901234. This specific moment becomes the starting number X₀.
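For illustration, the naive clock-based seed described above can be computed in Python as follows. This is only a sketch of the idea: Python's own `random` module does not seed itself this way by default, because it prefers the operating system's randomness when that is available.

```python
import time

# Naive seeding, imitating the approach described above:
# the current Unix time in whole milliseconds.
seed = time.time_ns() // 1_000_000
print(seed)            # a 13-digit number such as 1715432901234
print(len(str(seed)))  # 13 (true for dates between 2001 and 2286)
```

Anyone who knows roughly when this line ran can narrow the seed down to about a thousand candidates per second.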

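Only now does mathematics come into play: the algorithm takes the seed, multiplies it by a constant (a large one in real generators), adds another constant, and finally divides the result by a third number and keeps only the remainder (the modulo operation). That remainder becomes the next number in the sequence and the input for the next step. The whole process follows a strict pattern:

$$X_{n+1} = (a \cdot X_n + c) \bmod m$$

where a is the multiplier, c the increment, m the modulus, and X₀ the seed.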

Let's see how this works in practice with a simple example. Assume the algorithm has the parameters a = 5, c = 3, and m = 8, and that we choose 1 as the seed (the “starting point”).

- Step 1: (5 x 1 + 3) mod 8 = 0

- Step 2: (5 x 0 + 3) mod 8 = 3

- Step 3: (5 x 3 + 3) mod 8 = 2
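The three steps above can be reproduced with a minimal LCG sketch in Python. The parameters a = 5, c = 3, m = 8 and the seed 1 come from the example; the function name `lcg` is illustrative. Such tiny parameters are for demonstration only, since real generators use far larger moduli.

```python
from itertools import islice
from typing import Iterator


def lcg(seed: int, a: int = 5, c: int = 3, m: int = 8) -> Iterator[int]:
    """Yield X_1, X_2, ... where X_{n+1} = (a * X_n + c) mod m and X_0 = seed."""
    x = seed
    while True:
        x = (a * x + c) % m
        yield x


# The first three values match the worked example.
print(list(islice(lcg(seed=1), 3)))   # [0, 3, 2]

# With m = 8 there are only 8 possible values, so the sequence must repeat.
print(list(islice(lcg(seed=1), 10)))  # [0, 3, 2, 5, 4, 7, 6, 1, 0, 3]
```

Because the output depends only on the seed and the three constants, calling `lcg(seed=1)` twice always yields the same sequence.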

Your “random” sequence is 0, 3, 2, and so on. Although these numbers may look random to an outsider, if I know the formula and the starting number (your seed), the entire “randomness” is 100% predictable and repeatable to me. With m = 8, the sequence even starts repeating after at most eight steps. A hacker doesn't have to guess; they simply perform the same calculation as your computer.
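The claim that an attacker "simply performs the same calculation" can be sketched with Python's built-in `random.Random`. It uses the Mersenne Twister rather than an LCG, but it is just as deterministic: two generators given the same seed produce the same outputs. The seed below is the example timestamp from earlier; the variable names are illustrative.

```python
import random

SEED = 1715432901234  # the example millisecond timestamp from the text

victim = random.Random(SEED)
attacker = random.Random(SEED)  # same algorithm, same seed

victim_numbers = [victim.randint(1, 25) for _ in range(5)]
attacker_numbers = [attacker.randint(1, 25) for _ in range(5)]

# Knowing the seed is enough to "predict" every draw.
print(victim_numbers == attacker_numbers)  # True
```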

Predictability is a death sentence for security

Why is this mathematical repeatability so dangerous? Because much of modern network security, from encryption keys to session tokens, rests on the assumption that an attacker cannot predict the next value. If the randomness fails, the entire digital fortress crumbles.

Here's what happens when the system “draws” poorly:

- Session hijacking: When you log in to your bank, the system assigns you a “random” session ID. If a hacker knows the algorithm and the time of your login, they can calculate this number and access your account without entering your password.

- Password reset attacks: “Forgot password” features email you a link containing a unique token. If this token is predictable, a criminal can generate it themselves before you even receive the email and set their own password on your account.
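Here is a minimal sketch of the session-hijacking scenario. It assumes a hypothetical server that seeds Python's `random.Random` with the login time in milliseconds and an attacker who knows that time to within about two seconds. The function names `weak_session_token` and `candidate_tokens` are made up for this example. The attacker ends up with a few thousand candidates, one of which is guaranteed to be the victim's token. In a real attack, each candidate would still have to be tried against the server.

```python
import random
import secrets


def weak_session_token(login_ms: int) -> str:
    """Hypothetical INSECURE token: a PRNG seeded with the login time in ms."""
    rng = random.Random(login_ms)
    return f"{rng.getrandbits(64):016x}"


def candidate_tokens(approx_ms: int, window_ms: int = 2000) -> list[str]:
    """Every token the weak scheme could have produced within +/- window_ms."""
    return [
        weak_session_token(ms)
        for ms in range(approx_ms - window_ms, approx_ms + window_ms + 1)
    ]


login_ms = 1715432901234              # the victim's exact login instant
victim_token = weak_session_token(login_ms)

# The attacker only knows the login happened "around" 1715432900000.
candidates = candidate_tokens(1715432900000)
print(len(candidates))                # 4001
print(victim_token in candidates)     # True

# The fix: draw the token from the OS's cryptographically secure generator.
strong_token = secrets.token_hex(8)   # 16 hex characters, independent of the clock
```

The last line shows the usual fix. Python's `secrets` module draws from the operating system's cryptographically secure generator, so the token cannot be recomputed from the login time. The same applies to password reset tokens.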

How to do it better? Predictable math versus the lava lamp wall

If pure mathematics is too predictable, how do the systems on which your safety depends deal with this problem? The solution is ingenious: they look for chaos in the physical world that cannot be captured in any equation.

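Instead of relying on the system clock, security-critical systems use hardware random number generators, also called true random number generators (TRNGs). They collect data from naturally unpredictable processes: thermal noise in electronic circuits, quantum phenomena, or the movement of bubbles. In practice, this physical entropy is usually used to seed a cryptographically secure PRNG rather than consumed directly.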

The most famous example? Cloudflare, a company that secures a huge part of the global internet. In the lobby of its San Francisco headquarters there is a wall of about a hundred lava lamps. A camera continuously records their chaotic movement, and a computer converts every pixel of the image into data. Because the wax in the lamps never moves the same way twice, the system receives a steady source of unpredictable randomness.
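The step "a computer converts every pixel of the image into data" might look something like the hypothetical sketch below. It is not Cloudflare's actual pipeline: the function name, the tiny fake frames, and the choice of SHA-256 as the condensing step are all assumptions. Hashing is a common way to squeeze raw, uneven sensor data into a fixed-size seed in which every input bit matters.

```python
import hashlib


def frame_to_seed(frame: bytes) -> bytes:
    """Condense raw pixel bytes from one camera frame into a 32-byte seed."""
    return hashlib.sha256(frame).digest()


# Two hypothetical 4x4 grayscale "frames" (16 bytes each) that differ in one pixel.
frame_a = bytes(range(16))
frame_b = frame_a[:-1] + bytes([frame_a[-1] ^ 1])

seed_a = frame_to_seed(frame_a)
seed_b = frame_to_seed(frame_b)
print(len(seed_a))         # 32
print(seed_a != seed_b)    # True: one changed pixel gives an unrelated seed
print(seed_a.hex()[:16], seed_b.hex()[:16])
```

In a real system, such a seed would be mixed with other entropy sources and fed into a cryptographically secure PRNG rather than used on its own.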

But that's not all. Cloudflare takes “physical chaos” very seriously, and each of its major offices uses a different source of entropy:

- In their Lisbon office (European headquarters), they built an “entropy wall” consisting of 50 machines generating waves in constant motion.

- In Austin, they use hanging structures (mobiles) in rainbow colors that react to the slightest movements of air in the building.

- In London, a system of double pendulums has been installed. Their chaotic motion is extremely sensitive to tiny differences in starting conditions and to vibrations, and the camera image also changes with the lighting.

Lava lamps and other mechanisms like these help protect connections such as the one you open when you log in to your bank. And that brings us to the problem with language models, which, unfortunately, have no lava lamps...

Why do LLMs “randomly” pick 17?

Language models (LLMs) such as ChatGPT are not calculators. They are gigantic systems that predict what text comes next. They learned from an enormous share of what people have written on the internet. And that's where the problem lies: we humans are terrible at being random, and AI simply inherits our cognitive biases.

Psychological studies have documented the phenomenon of the number 17 for years. According to the Jargon File, 17 is even known at MIT as “the least random number.” Why? Because when I ask you to choose a number between 1 and 20 or 25, your brain activates a set of heuristics:

- You avoid extremes: 1 and 20 seem too obvious.

- You avoid “round” numbers: 10 is the middle, so you reject it. 5 and 15 are multiples of five, so they seem to have structure.

- You look for “oddities”: you choose odd and prime numbers that do not form any simple pattern. 17 sits perfectly in the blind spot of your intuition.

This is confirmed by hard experimental data:

- ScienceBlogs (2007): In a study of 347 people, as many as 18% chose 17 (the expected result with true randomness is 5%).

- Reddit r/SampleSize: In a poll, 17 received 20% of the votes as “the most random,” while the number 5 received only 2%.

- John Conway's experiments: The legendary mathematician repeatedly demonstrated to his students that 17 dominates their choices.
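How unusual is 18% in the ScienceBlogs study? A quick binomial calculation gives a sense of scale. This sketch assumes the study's range was 1 to 20 (implied by the 5% figure) and reconstructs a count of about 62 people from the rounded percentage, so the result is approximate.

```python
from math import comb

n = 347                      # participants in the ScienceBlogs study
observed = round(0.18 * n)   # about 62 people chose 17 (reconstructed from the rounded 18%)
p = 1 / 20                   # chance of picking 17 with a fair draw from 1..20

expected = n * p             # how many 17s pure chance would give on average
# Exact binomial tail: probability that fair draws give `observed` or more 17s.
p_tail = sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(observed, n + 1))

print(observed, round(expected, 2))  # 62 17.35
print(f"P(at least {observed} by chance) = {p_tail:.1e}")
```

The tail probability is vanishingly small, which is a formal way of saying that 17 is not being chosen by chance.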

This phenomenon carries over directly into large language models. It is important to understand that a language model does not have a built-in random number generator in the traditional sense. It works as a statistical engine that generates successive “tokens” (text fragments), each chosen according to how likely it is to follow the text so far. Since most leading models were trained on similar data sets (huge collections of text from the internet, books, and posts), they all became “saturated” with the same human patterns.

I decided to check this myself and asked each of the most popular models for a random number 100 times. The share of answers that were the legendary “17” is staggering:

- OpenAI (gpt-5.2): 100% - in all 100 trials, the model indicated exactly 17. A statistical wall that cannot be broken through.

- Claude (claude-sonnet-4-5-20250929): 99% - only once did it manage to “think” differently.

- Gemini (gemini-flash-latest): 64% - Google's model proved to be the most “rebellious,” although this result is still vastly different from true randomness (which should be 4-5%).
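The article does not say exactly how the 100 requests were made (prompt wording, temperature, API settings), so the harness below is a hypothetical sketch of how such a test could be run. `ask_model` stands for any function that sends a prompt to a model and returns its reply text. You would wire it to your provider's SDK. Here, a fake model that always answers 17 takes its place. A real harness would also need to extract the number from replies that contain extra words.

```python
from collections import Counter
from typing import Callable

PROMPT = "Choose a random number between 1 and 25."


def share_of_17(ask_model: Callable[[str], str], trials: int = 100) -> float:
    """Send the same prompt `trials` times; return the fraction of replies equal to "17".

    `ask_model` is a hypothetical callable standing in for a real model API.
    """
    answers = Counter(ask_model(PROMPT).strip() for _ in range(trials))
    return answers["17"] / trials


def fake_model(prompt: str) -> str:
    """Stand-in that always answers 17, like the 100% result above."""
    return "17"


uniform_expectation = 1 / 25  # 4% for a fair draw from 1..25
print(share_of_17(fake_model), uniform_expectation)  # 1.0 0.04
```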

An LLM is not a calculator—why are numbers like words to it?

“Well, that's cool, AI likes seventeen, but what does that tell me about the tool itself?” you might ask.

This says something fundamental: an LLM doesn't calculate, it predicts text. For a language model, “17” is not a mathematical value between 16 and 18. It's just another token, like “cat,” “house,” or “safety.” When you ask it to “draw” a number, the model doesn't run a PRNG algorithm to pick it or look at lava lamps. It works with conditional probability: “Which token is statistically most likely to follow a request for a random number in human-written text?”

The answer is seventeen.
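To make "most likely token" concrete, here is a toy sketch of two common decoding strategies. The token scores are invented, and the decoding settings the tested models actually use (temperature, top-p, and so on) are not known from the text. Greedy decoding always returns the top token, which would match a 100% result. Temperature sampling occasionally lets other tokens through.

```python
import math
import random

# Hypothetical next-token scores (logits) after a "pick a random number" prompt.
# These values are invented for illustration; a real model scores a huge vocabulary.
LOGITS = {"17": 4.0, "7": 2.5, "13": 2.0, "23": 1.5, "3": 1.0}


def softmax(logits: dict[str, float], temperature: float = 1.0) -> dict[str, float]:
    """Convert scores to probabilities; a lower temperature sharpens the distribution."""
    scaled = {tok: score / temperature for tok, score in logits.items()}
    top = max(scaled.values())  # subtract the maximum for numerical stability
    exps = {tok: math.exp(s - top) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def greedy(logits: dict[str, float]) -> str:
    """Greedy decoding: always return the single highest-scoring token."""
    return max(logits, key=logits.get)


def sample(logits: dict[str, float], temperature: float, rng: random.Random) -> str:
    """Sampling: draw one token in proportion to its softmax probability."""
    probs = softmax(logits, temperature)
    return rng.choices(list(probs), weights=list(probs.values()), k=1)[0]


print(greedy(LOGITS))                   # 17, every single time
print(round(softmax(LOGITS)["17"], 2))  # 0.67
rng = random.Random(0)
draws = [sample(LOGITS, 1.0, rng) for _ in range(1000)]
print(draws.count("17") / len(draws))
```

Either way, the “randomness” only reshuffles a distribution learned from human text, so 17 stays the favorite.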

That's why AI is great at writing emails, summarizing reports, or translating texts, but gets lost when it comes to simple operations on very large numbers or when trying to be “unpredictable.” It doesn't understand the logical structure of mathematics—it understands the statistical structure of language.

So if you need creative text, AI is brilliant. But if you're looking for true randomness or precise calculations, trust proven, classic mathematical tools. Because in the world of AI, randomness is just another linguistic convention in which you and your biases are the main architects.
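Python's standard-library `secrets` module is one such classic tool. It can draw a fair number between 1 and 25 from the operating system's cryptographically secure generator. The helper name `fair_pick` is illustrative. Unlike a language model, it lands on 17 only about 4% of the time.

```python
import secrets
from collections import Counter


def fair_pick(low: int = 1, high: int = 25) -> int:
    """Uniform integer in [low, high] from the OS's cryptographically secure generator."""
    return low + secrets.randbelow(high - low + 1)


# 100,000 draws: every number, including 17, should land near 1/25 = 4%.
counts = Counter(fair_pick() for _ in range(100_000))
print(f"share of 17: {counts[17] / 100_000:.3f}")
```

`random.randint` would also be fine for games and simulations, just not for anything security-related.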

Now admit it—what did your AI draw?

View related articles

Awarie IT zdarzają się każdemu

Od paru godzin trwa awaria komunikatora internetowego Slack. Kilka tygodni temu nie można było korzystać z usług firmy Google, a jeszcze wcześniej spora część Internetu nie działała z powodu awarii usług Cloudflare. Czy to możliwe, że usługi w chmurze są niedostępne?

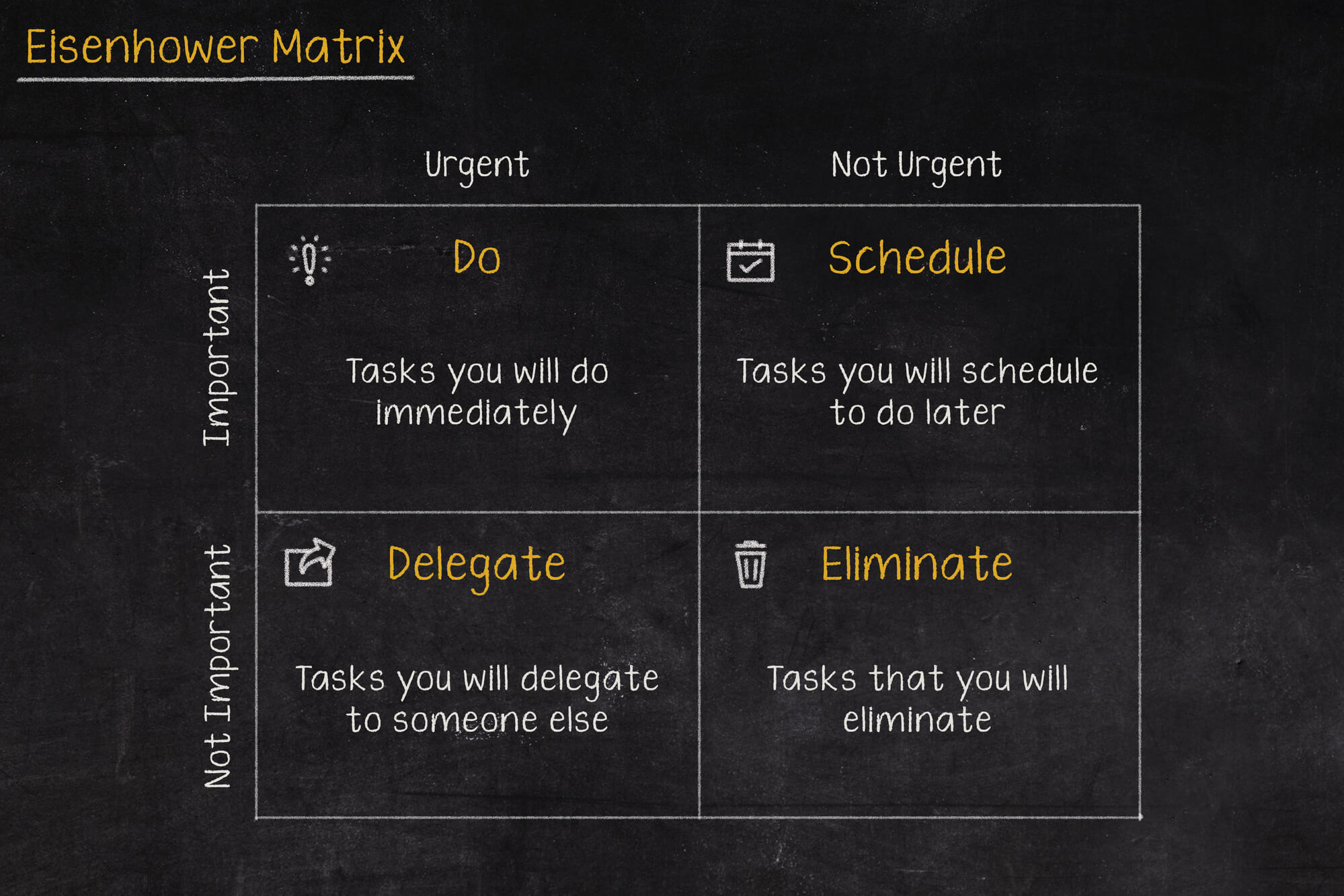

Macierz Eisenhovera, czyli jak zapanować nad priorytetami?

Iść na przerwę a może odpisać na tego maila, czy odebrać telefon od przełożonego? W jakiej kolejności zająć się tymi zadaniami, aby nie utracić nad tym kontroli i nie popaść w bezsilność? Rozwiązaniem tych problemów może być Macierz Eisenhowera (nazywana także Matrycą lub Kwadratem Eisenhowera).

Czy Alert RCB powinien informować o wyborach prezydenckich?

Komunikacja w niebezpieczeństwie jest jednym z ważniejszych zagadnień jakie się porusza podczas żeglowania, latania czy nurkowania. Ostrzeżenia potrafią uratować życie, dlatego nie powinny być lekceważone, a tym bardziej nie powinny swoją treścią prowadzić do ich zignorowania.