Is Vibe Coding safe?

The world has gone crazy for creating applications using artificial intelligence. Tools such as Lovable and Cursor are breaking popularity records, and the internet is teeming with courses promising that anyone—even non-technical people—can create a profitable startup by simply entering the right commands (prompts). This phenomenon has been dubbed “vibe coding.” But is it safe to entrust software development to amateurs armed with AI?


I often say that cybersecurity is much more than just an IT issue. It is a matter of your business survival and protecting your customers' data. And in the world of vibe coding, where “vibe” and speed are what count, security is often forgotten.

It seems to be working

Imagine you are building a house. AI is like a bricklayer-plumber-painter who lays bricks at lightning speed. The walls are up, they're straight, and they're even nicely painted. Looks great, right? The problem is that he forgot about the foundations, and the electrical wiring is connected to the gutters. As long as there's no wind or rain, the house stands. But the first storm will raze it to the ground.

The main problem here is the creator's lack of technical knowledge. Someone who is not a programmer simply does not know what to ask artificial intelligence in the context of security.

“When you wrote the application using the prompt, did you write: remember to secure the forms against XSS, or to prevent SQL injection?”

This is exactly how AI-generated code works for non-technical people. The application “clicks,” the forms work, but under the hood, there are usually no security measures in place, which ultimately results in user data leaks. There are huge holes in the code:

- No data verification: AI can generate a form that “swallows” everything. This opens the door to SQL injection attacks, where hackers enter a malicious command instead of a name and download your entire database.

- No encryption: AI often takes the easy way out. Your users' passwords may be stored in plain text. One leak and you'll have prosecutors and GDPR penalties on your hands.
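To make both holes concrete, here is a minimal sketch of what the missing safeguards can look like, using only Python's standard library. The `users` table, the function names, and the iteration count are assumptions made up for this illustration, not code from any particular AI tool. User input reaches the database only through `?` placeholders, and passwords are stored as salted PBKDF2 hashes instead of plain text.

```python
import hashlib
import hmac
import os
import sqlite3

# Assumption: iteration count picked for illustration; tune it for your hardware.
PBKDF2_ITERATIONS = 600_000


def derive_hash(password: str, salt: bytes) -> bytes:
    """Derive a slow, salted hash so the plain-text password is never stored."""
    return hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, PBKDF2_ITERATIONS
    )


def create_users_table(conn: sqlite3.Connection) -> None:
    """Create the hypothetical users table used in this sketch."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS users ("
        "name TEXT PRIMARY KEY, salt BLOB NOT NULL, password_hash BLOB NOT NULL)"
    )


def create_user(conn: sqlite3.Connection, name: str, password: str) -> None:
    """Store a new user with a random salt and a hashed password."""
    salt = os.urandom(16)
    # The ? placeholders pass values as data, so they are never parsed as SQL.
    conn.execute(
        "INSERT INTO users (name, salt, password_hash) VALUES (?, ?, ?)",
        (name, salt, derive_hash(password, salt)),
    )


def check_login(conn: sqlite3.Connection, name: str, password: str) -> bool:
    """Return True only if the user exists and the password matches."""
    row = conn.execute(
        "SELECT salt, password_hash FROM users WHERE name = ?", (name,)
    ).fetchone()
    if row is None:
        return False
    salt, expected = row
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(derive_hash(password, salt), expected)
```

With this in place, a classic injection string such as `' OR '1'='1` typed into the name field is simply looked up as a (non-existent) user name. A real product would more likely rely on a vetted authentication library than on hand-written code like this.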

AI hallucinations

Non-technical people treat AI as an oracle. If ChatGPT, Cursor, Bolt.new, or Windsurf provide a ready-made script, we assume it is correct. This is a mistake! AI is not a calculator; it is a statistical engine that sometimes... makes things up.

This phenomenon is called hallucination. AI may suggest using a library (a ready-made code module) that does not actually exist. And here comes the key question: how can you, as a non-technical person, know that this is a trap?

For you, it's just another line of text that you copy into the terminal. If the AI says, “install the super-secure-auth-pro package,” you do it. What's worse, your application may work fine at first because the AI has created a skeleton that “pretends” everything is fine.

This creates an opportunity for an attack called slopsquatting. Hackers research which non-existent library names AI invents most often, then register those names in public package registries and publish them with hidden malicious code. The moment you install such a “hallucination” in your project, you give the hacker the keys to your kingdom. They take control, and you don't even notice the error because the “vibe” is right and the program has started. The risk remains the same, whether you're using a simple chat or an advanced agent like Replit Agent.
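A rough way to picture the check you should run before installing anything an AI suggests is the sketch below. It does not talk to any registry. The `PackageInfo` fields are meant to be filled in by hand from the package's page, and the thresholds (90 days, 1,000 weekly downloads) are arbitrary assumptions chosen only to illustrate the idea.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class PackageInfo:
    """Facts copied by hand from the package's registry page (hypothetical shape)."""
    name: str
    first_published: date
    weekly_downloads: int
    has_documentation: bool


def red_flags(
    pkg: PackageInfo,
    today: date,
    min_age_days: int = 90,            # assumption: illustrative threshold
    min_weekly_downloads: int = 1000,  # assumption: illustrative threshold
) -> list[str]:
    """Return human-readable warnings; an empty list means no obvious red flag."""
    flags = []
    if (today - pkg.first_published).days < min_age_days:
        flags.append("published very recently")
    if pkg.weekly_downloads < min_weekly_downloads:
        flags.append("almost no downloads")
    if not pkg.has_documentation:
        flags.append("no documentation")
    return flags


suspicious = PackageInfo(
    name="super-secure-auth-pro",
    first_published=date(2025, 1, 9),
    weekly_downloads=0,
    has_documentation=False,
)
for warning in red_flags(suspicious, today=date(2025, 1, 10)):
    print(f"{suspicious.name}: {warning}")
```

An empty result is not proof that a package is safe. It only means the most obvious warning signs are absent.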

Personal data, sensitive data

Vibe coding tempts with the vision of “zero costs,” but the actual costs may appear after the first leak. If your application collects data from even one person, you become a personal data administrator. At that point, you enter the world of GDPR and real legal responsibility.

The problem goes beyond AI itself, because we often repeat bad habits from the “analog” world. It is still standard practice in many companies (e.g., insurance companies or apartment landlords) to send photos of ID cards via WhatsApp or Messenger. This is a surefire recipe for disaster.

With vibe coding and automation, this risk grows sharply. Amateurs create systems that collect extremely sensitive information (credit card numbers, document scans, or medical data) without having a clue about encryption or secure data storage.

- Do you know where your application's servers are physically located?

- Is your database “visible” from anywhere on the internet?

- Did you check that your prompt did not contain API keys (passwords for other services) that may now end up in the model's training data?

Remember that hackers are not interested in your “vibe,” but rather easy prey. A database leak from an amateur-made application that stores card numbers without any security measures is the easiest possible scenario for them.
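For the API key question above, a small habit helps: run your text through a filter before pasting it into a chat window. The sketch below is only an illustration under stated assumptions. The three patterns (an AWS-style access key ID, a PEM private key header, and generic `name=value` pairs) are examples I picked, and a real secret scanner covers far more formats.

```python
import re

# Assumption: a deliberately short, illustrative pattern list, not a complete one.
SECRET_PATTERNS = [
    # AWS access key IDs start with AKIA followed by 16 uppercase letters or digits.
    re.compile(r"AKIA[0-9A-Z]{16}"),
    # Header line of a PEM-encoded private key.
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    # Generic "api_key=...", "token: ..." style assignments.
    re.compile(
        r"\b(api[_-]?key|token|secret|password)\b\s*[:=]\s*\S+",
        re.IGNORECASE,
    ),
]


def redact_secrets(text: str) -> tuple[str, int]:
    """Replace anything that looks like a secret; return the text and a hit count."""
    hits = 0
    for pattern in SECRET_PATTERNS:
        text, replaced = pattern.subn("[REDACTED]", text)
        hits += replaced
    return text, hits


prompt = "Fix my script. API_KEY=abc123secret and region eu"
clean_prompt, found = redact_secrets(prompt)
print(clean_prompt)  # Fix my script. [REDACTED] and region eu
print(found)         # 1
```

If the hit count is above zero, treat the key as something to rotate, not just something to hide, in case it has already been pasted somewhere else.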

The true costs of Vibe Coding

Contemporary marketing sells vibe coding as the “Holy Grail” of business – a promise that you can set up a startup for pennies without any technical knowledge. In the mentoring programs I participate in, I regularly meet people who are fascinated by these advertisements. They think that for $50 and in two evenings, they can create an app on par with a powerful auction portal.

Their enthusiasm usually fades away when we start talking about the fundamentals: GDPR, database encryption, audits, hundreds of necessary security tests, server infrastructure, server administration, backups... and so on.

It then turns out that AI has only generated a “pretty picture” rather than a safe product that can be shown to the world.

Many entrepreneurs downplay these issues, treating security as an unnecessary cost or “a problem for IT specialists.” They assume that a possible administrative penalty is just a calculated risk of doing business. However, I often say that a fine is the least of your problems. If you don't think about security at the outset, your “cheap” application will quickly become your most expensive mistake, and the savings made on developers will disappear at the first attempted attack.

The true cost of ignorance is:

- Financial and legal penalties: High fines from UODO (Poland's data protection authority) for non-compliance with the GDPR are a real risk that could shut down a small business in a single day.

- Loss of reputation and trust: Customers will never return to a company that has allowed their data to be leaked. Trust takes years to build and can be lost in a second.

- Business paralysis: After an attack, you have to “clean up,” which can take months. During this time, you are not selling, you are not serving customers, and you are losing market share to your competitors.

- Employee exodus: No one wants to work for a company associated with incompetence and data leaks.

Dealing with cyberattacks should be like preparing for a fire—we need to have fire extinguishers and procedures in place, rather than hoping that the fire will pass us by.

The illusion of easy money

I always strongly criticize courses that promise to “become an AI developer in a week.” In my opinion, this is simply selling dreams. If the knowledge contained in a $200 course really allowed you to earn $60,000 a month, the creators of the courses would use it themselves instead of training others.

Particularly dangerous are the “educators” I often see at conferences on marketing and social media. These are people who have absolutely no technical knowledge themselves, yet teach others how to build complex AI automations.

The sight of a slide on which such an “expert” proudly presents their automation process, while publicly displaying their own API keys to services, is unfortunately a sad norm.

This is a situation in which a person teaching others about “savings” exposes themselves to unlimited costs and data theft. Such pseudo-education leads to the proliferation of errors and the creation of an entire ecosystem of flawed, extremely dangerous digital solutions, which in the hands of unaware marketers become a ticking time bomb.

He encrypted his invoices, then exposed them

The internet went wild after the revelation of a spectacular programming blunder that exposed the dark side of the trend of creating apps “over the weekend with the help of artificial intelligence.” A screenshot from a social media discussion shows a merciless but factual rundown of the security errors made by the author of a certain project.

According to an exposé published by a user named Michał, the app's creator made two cardinal mistakes when publishing his software:

- Private database leak: When creating the application installer, the author forgot to exclude his private SQLite database from it. As a result, anyone who installed and opened the program gained access to the creator's private finances, including his spending history on platforms such as Allegro and PayU.

- The illusion of encryption and an exposed key: Although the project documentation (README) on GitHub suggested that the data was secure, in reality only the token for KSeF (Poland's National e-Invoice System) was encrypted. The real disaster, however, was that the symmetric key used for this encryption was hard-coded in the source code and published in an open repository. Thus, the author unwittingly gave the public access to his production KSeF token.

Commenters in the discussion tore the creator to shreds, aptly calling the situation a “self-exploit” and not hiding their amusement at the cryptographic key being committed straight into public code.

As the reviewer bitterly concluded, this incident is perfect proof that no large language model (LLM) can replace fundamental technical skills and basic security. Projects hastily written by so-called “AI bros” who uncritically trust artificial intelligence can pose a huge threat, primarily to themselves.
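The hard-coded key from this story has a simple alternative: the key lives outside the source code and is read at runtime. Below is a minimal sketch of that idea. The variable name `KSEF_TOKEN_KEY` and the `load_secret` helper are hypothetical and not taken from the project in question, whose code is not shown here.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret has not been provided to the process."""


def load_secret(name: str) -> str:
    """Read a secret from the environment instead of embedding it in the code."""
    value = os.environ.get(name, "").strip()
    if not value:
        # Failing loudly beats silently falling back to a built-in default key.
        raise MissingSecretError(f"Environment variable {name} is not set")
    return value


# Hypothetical usage: the key never appears in the repository.
# encryption_key = load_secret("KSEF_TOKEN_KEY")
```

This only keeps the key out of the repository. For an application installed on users' machines, the operating system's keychain or credential store is usually the better home for such a secret.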

Experts slip up too

It might seem that the risks described above only apply to laymen. Unfortunately, the “vibe” is so strong and tempting that it can lull even highly experienced people into a false sense of security. A perfect example is the recent story of Jakub Mrugalski, as described by the editors of Zaufana Trzecia Strona.

Jakub, an infrastructure and security expert, experimented with vibe coding, building solutions by extensively guiding AI with prompts instead of writing code line by line in the traditional way. The result? During these experiments, some of the artifacts (code, configuration) ended up in a place where they were publicly accessible—a phenomenon that could be called “vibe hosting.”

Z3S used this as a case study: if a specialist playing around with new tools can accidentally expose sensitive data or internal code to the world, what chance does an amateur have? The problem is that vibe coding lowers the entry threshold and drastically speeds up prototyping, but it does not eliminate technical problems.

The moral is simple: scanners and bug hunters find such errors without much effort. Vibe coding and vibe hosting are great for building toys or very early prototypes (MVP), but if you are going into production or operating on other people's data, you need to go back to classic hygiene:

- Code review: Someone (or another process) must check what the AI has spit out.

- Asset hygiene: Ensuring that secrets and configuration do not leak into public directories.

- Audits and monitoring: Constantly checking that what we've built “on vibe” isn't an open door for hackers.
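Part of this hygiene can be automated with very little code. The sketch below walks a folder you are about to publish and lists files that usually have no business being there, such as `.env` files, local databases, and private keys. The pattern list is an assumption for illustration and should be adapted to the project.

```python
from fnmatch import fnmatch
from pathlib import Path

# Assumption: an illustrative list of file names that often hold secrets or private data.
RISKY_PATTERNS = (
    ".env", "*.env", "*.sqlite", "*.sqlite3", "*.db", "*.pem", "*.key", "id_rsa",
)


def find_risky_files(root: Path) -> list[Path]:
    """Return paths (relative to root) whose file names match a risky pattern."""
    hits = []
    for path in sorted(root.rglob("*")):
        if path.is_file() and any(fnmatch(path.name, p) for p in RISKY_PATTERNS):
            hits.append(path.relative_to(root))
    return hits


if __name__ == "__main__":
    # Scan the current directory, for example right before building an installer.
    for risky in find_risky_files(Path(".")):
        print(f"Do not publish: {risky}")
```

A check like this would have flagged a private SQLite database before it shipped inside an installer, although it only inspects file names and does not replace a proper review of what the files contain.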

Final thoughts

Artificial intelligence is a powerful tool, but it should be treated as a “co-pilot” rather than an autopilot. It can greatly assist an experienced developer with tedious, repetitive tasks, but it can never replace a system architect who sees the bigger picture.

Education and a change in mentality are crucial for companies and users today. Cybersecurity does not start with expensive firewalls, but with simple habits: verifying links, not clicking on suspicious attachments, and applying the principle of limited trust. As I often say, “It is better to have a policy and not need it than to need it and not have it.”

So before you put your idea into practice, keep a few rules in mind:

- The principle of limited trust: Never copy code mindlessly. If something seems too simple, it has probably overlooked security issues.

- Verify libraries: If AI tells you to install something, type the name of the package into Google or npmjs.com. If it has zero downloads, was created yesterday, or has no documentation, don't touch it!

- Think about data: Never collect data that you cannot secure. If your application requires card numbers or social security numbers, and you don't know how AES-256 encryption works, ask a specialist for help.

- Education first and foremost: Cybersecurity is the new First Aid. You need to know the basics so you don't hurt yourself or others.

Vibe coding is revolutionary, but every revolution has its victims. Don't let your startup be one of them just because “vibe” was more important than safety. Coding with AI is like driving a car—it gives you freedom and speed, but only if you know where the brakes are and you buckle up.

If you are unsure whether your project is secure, do not publish it to the world. Makeshift solutions on the web come back to haunt you faster than you think.

View related articles

Awarie IT zdarzają się każdemu

Od paru godzin trwa awaria komunikatora internetowego Slack. Kilka tygodni temu nie można było korzystać z usług firmy Google, a jeszcze wcześniej spora część Internetu nie działała z powodu awarii usług Cloudflare. Czy to możliwe, że usługi w chmurze są niedostępne?

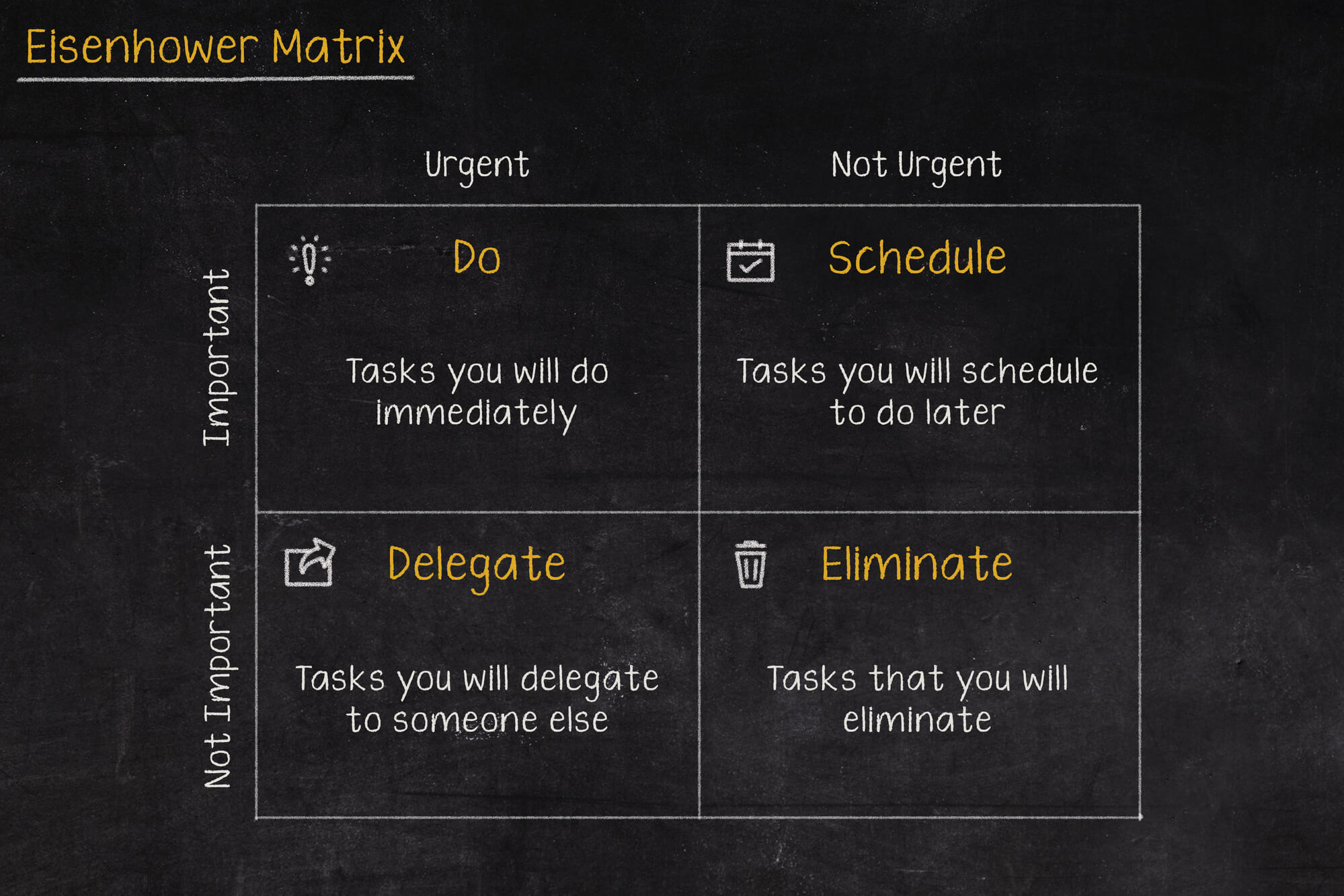

Macierz Eisenhovera, czyli jak zapanować nad priorytetami?

Iść na przerwę a może odpisać na tego maila, czy odebrać telefon od przełożonego? W jakiej kolejności zająć się tymi zadaniami, aby nie utracić nad tym kontroli i nie popaść w bezsilność? Rozwiązaniem tych problemów może być Macierz Eisenhowera (nazywana także Matrycą lub Kwadratem Eisenhowera).

Czy Alert RCB powinien informować o wyborach prezydenckich?

Komunikacja w niebezpieczeństwie jest jednym z ważniejszych zagadnień jakie się porusza podczas żeglowania, latania czy nurkowania. Ostrzeżenia potrafią uratować życie, dlatego nie powinny być lekceważone, a tym bardziej nie powinny swoją treścią prowadzić do ich zignorowania.