Why is AI not suitable for password generation?

Is asking AI to generate a strong password a good idea? In this article, I explain why the token prediction mechanism makes “random” passwords from ChatGPT or Claude predictable for cybercriminals.

Table of Contents

Remember my test with “randomizing” numbers from 1 to 25? If you asked ChatGPT, Claude, or Gemini for any number in that range, there's a good chance you saw 17 in response. This wasn't a coincidence or a bug in the code, but a fundamental feature of current technology that I call “statistical imprisonment.”

AI does not roll dice—it predicts the most likely token based on how people think and choose. The number 17 is commonly perceived by us as the “most random” in this range (because 1 is too simple and 25 is the end of the scale), so the model, in order to sound natural and convincing, simply replicates our human cognitive biases.

If so, let's take it a step further. If AI “randomizes” 17 because that's what human choice statistics tell it to do, what happens when you ask it for a “strong, unique, and random password”?

Short answer: A disaster begins that is not immediately apparent.

The illusion of randomness: G7#xL9@vM2$kP5!q

On the monitor screen, the password generated by the chatbot looks random. It has upper and lower case letters, numbers, and special characters. It meets the strict requirements of any registration form.

The problem is that for the algorithm, this password is not the result of chaos (entropy), but a mathematically calculated continuation. Language models (LLMs) do not generate content out of thin air. Their only task is to take the existing context (your query) and guess what character should come next so that the result is “satisfactory” and statistically consistent with the training data.

When you ask for a “random password,” AI searches its gigantic databases for patterns that people on the internet have described as “looking random.” The model doesn't generate noise—it generates an imitation of noise. It's like asking an actor to play the role of a madman: he doesn't become unpredictable, he just uses techniques that we associate with madness. Asking an LLM for randomness is asking it to deny its own nature, which was built to be predictable, logical, and understandable to humans.

Statistical predictability

Researchers from Irregular Security conducted an experiment. They tested the most popular models for their actual ability to generate unique strings of characters. The results show that AI is tragically repetitive.

All tested language models (LLMs) essentially failed to generate secure passwords. Although at first glance they create strings of characters that appear to comply with security rules (combining upper and lower case letters, numbers, and special characters), in reality these passwords are extremely predictable and vulnerable to cracking.

Here is how individual models and agents based on them performed in terms of password generation:

Claude (Opus 4.6 and earlier versions)

- Strong repeatability: In 50 attempts to generate a password, the model created only 30 unique values, of which the password “G7$kL9#mQ2&xP4!w” appeared as many as 18 times.

- Patterns: Passwords almost always began with a letter (usually “G”), followed by the number “7.” The model favored only selected characters, completely ignoring most of the alphabet, systematically avoided repeating the same character within a password, and did not use the asterisk character (*).

- Very low entropy: Instead of the expected 98 bits of entropy for a 16-character password, the estimated entropy of the generated passwords was only about 27 bits, meaning they could be cracked in a matter of seconds.

- Agents (Claude Code): Their behavior depended on the version and prompt. Opus 4.5 usually generated passwords on its own. The newer Opus 4.6 usually preferred to use secure commands (e.g.,

openssl rand), but it was enough to change the command from “generate password” to “suggest password” for the agent to revert to the unsafe practice of generating passwords directly through the model.

The GPT family of models (GPT-5.2, Codex, ChatGPT Atlas)

- Patterns: In 50 trials (which yielded 135 password suggestions), a high degree of repeatability was observed. Almost all passwords began with the letter “v,” and nearly half of them had the letter “Q” as the second character. Once again, the choice of characters was highly uneven.

- Entropy proven by logprobs: Analysis of the probabilities assigned to tokens by GPT-5.2 showed that a 20-character password, which in theory should offer over 120 bits of entropy, actually only had about 20 bits. This means it could be cracked after about a million attempts.

- Agents: Models such as GPT-5.3-Codex and GPT-5.2-Codex very often deliberately rejected the use of external randomization tools, mistakenly arguing in their reasoning that they themselves were capable of generating “strong and random” passwords. The ChatGPT Atlas agent browser also favored weak passwords generated by itself, e.g., when creating accounts on websites.

The Gemini family of models (Gemini 3 Pro and Flash)

- Warnings (Gemini 3 Pro): This model generated a “security warning” advising against using the suggested password. Unfortunately, the model gave the wrong reason for the problem, claiming that it was due to “server processing” rather than pointing to the actual low cryptographic strength of the password.

- Patterns (Gemini 3 Flash): Almost half of the generated passwords began with “K” or “k,” and the subsequent characters were usually selected from a narrow group: “#,” “P,” or “9.”

- Agents: As with the Claude model, the Gemini-CLI tool (using Gemini 3 Flash) behaved correctly and used system tools when asked to “generate,” but used an unreliable LLM engine when asked to “suggest” a password.

Nano Banana Pro (based on Gemini 3 in “Thinking” mode)

- Dependence on prompt context: When asked to generate a password written “on a sticky note stuck to the monitor,” the model surprisingly strongly preferred to generate simple words consistent with the famous xkcd comic (the so-called “four random words” method), associating such a medium with poor security habits.

- However, when the command was slightly modified to “choose a random password and write it down on a piece of paper,” Nano Banana Pro began generating passwords with typical, erroneous, and uneven patterns characteristic of Gemini models.

What is password entropy?

Entropy is a measure of password strength, expressed in bits. Simply put, it determines how many attempts an attacker would need to make to crack a password using a brute-force method.

- A password with 20 bits of entropy requires approximately 220 (or about a million) attempts, which takes a standard computer only a few seconds.

- In turn, a password with an entropy of 100 bits would require approximately 2100 attempts (a 31-digit number), and it would take trillions of years to crack it.

For a truly random password created from a pool of 70 characters (26 uppercase letters, 26 lowercase letters, 10 digits, and 8 special characters), each individual character should contribute approximately 6.13 bits of entropy.

Entropy of passwords generated by language models (LLM)

At first glance, passwords generated by models appear to be very strong. Popular password strength calculators (e.g., KeePass or zxcvbn), which rely on character statistics and dictionaries, rate 16-character passwords from LLM models at around 100 bits of entropy, predicting that it would take centuries to crack them.

However, in reality, language models do not select characters evenly, but with enormous statistical biases, which researchers have proven using Shannon's entropy formula. They estimated the actual entropy in two ways:

Analysis of character statistics (using Claude Opus 4.6 as an example)

Because the model had a strong tendency to favor specific characters (over 50% of the generated passwords began with the letter “G”), the first character of the password carried only 2.08 bits of entropy, instead of the expected 6.13 bits. Counting this for the entire string, Claude's 16-character password had an estimated entropy of only 27 bits, in contrast to the 98 bits we would expect from a fully random password of the same length.

Analysis of “logprobs” (using GPT-5.2 as an example)

When operating, language models assign a specific probability to each possible token, from which they then “draw” the result. By examining these internal probabilities (logprobs) in the GPT-5.2 model, researchers noticed extreme predictability. Although a 20-character password from GPT-5.2 should theoretically have over 120 bits of entropy, the average entropy was only about 1 bit per character. Worse still, for the 15th character in the password (the number “2”), the model was 99.7% certain that it would generate that particular number. The entropy of that single character was only 0.004 bits. As a result, the entire 20-character password had a real entropy of only 20 bits.

In summary, passwords from LLM models only appear to be secure. Instead of offering 100-120 bits of entropy, they actually have around 20-27 bits, which means they can be cracked in about a million attempts – i.e., in a few seconds on a regular computer.

Why won't “Prompt Engineering” work here?

I sometimes hear: “Kamil, I can write a better prompt! I'll tell it to use unusual ASCII characters and avoid repetition.” I understand the enthusiasm, but this is a mistake. No matter how complicated the command you give, the same statistical engine is still working under the hood.

Imagine asking AI to “stop being itself.” It's impossible. The model learns from our patterns and my, your, our mistakes:

If statistically people put the number “1” at the end of their passwords, AI will replicate this because it has seen it in millions of examples. If special characters such as “!” or “#” appear more often in guides on “strong passwords,” AI will consider them “more appropriate” than rare symbols. The model will always choose the path of greatest probability, which is the exact opposite of secure cryptography, where each character must have the same chance of occurring.

Attempting to “hack” the model to make it more random only shifts the problem. Instead of standard predictability, we get “specific predictability.” Hackers can model this by creating password databases dedicated specifically to specific AI models and specific types of prompts.

Password manager

Instead of asking ChatGPT about security, go back to basics and trust tools that were built solely for that purpose. Password managers (such as Bitwarden, 1Password, or KeePass) do not use neural networks or “vibe.” They use mechanisms called CSPRNG (Cryptographically Secure Pseudo-Random Number Generator).

The difference is fundamental to me:

- True sources of randomness: Password managers draw on physical unpredictability, such as processor noise, micro-movements of your mouse, or the time intervals between keystrokes.

- Maximum entropy: In such a password, each character has exactly the same chance of occurring. There are no “favorite letters” or “logical structures” that artificial intelligence could pick up on.

- No human biases: This slogan does not “look” strong. It simply is strong because it has no connection to human psychology or language statistics.

Summary

I see how many of us are seduced by the convenience of AI. But remember: security is not a “vibe,” it's mathematics. The next time you want to ask a bot to secure your digital life, remember the experiment with the number 17. A password from AI is only strong “by eye” — in the aesthetic category, not the defensive one.

My data and your data are worth more than a convenient but extremely dangerous shortcut.

View related articles

Awarie IT zdarzają się każdemu

Od paru godzin trwa awaria komunikatora internetowego Slack. Kilka tygodni temu nie można było korzystać z usług firmy Google, a jeszcze wcześniej spora część Internetu nie działała z powodu awarii usług Cloudflare. Czy to możliwe, że usługi w chmurze są niedostępne?

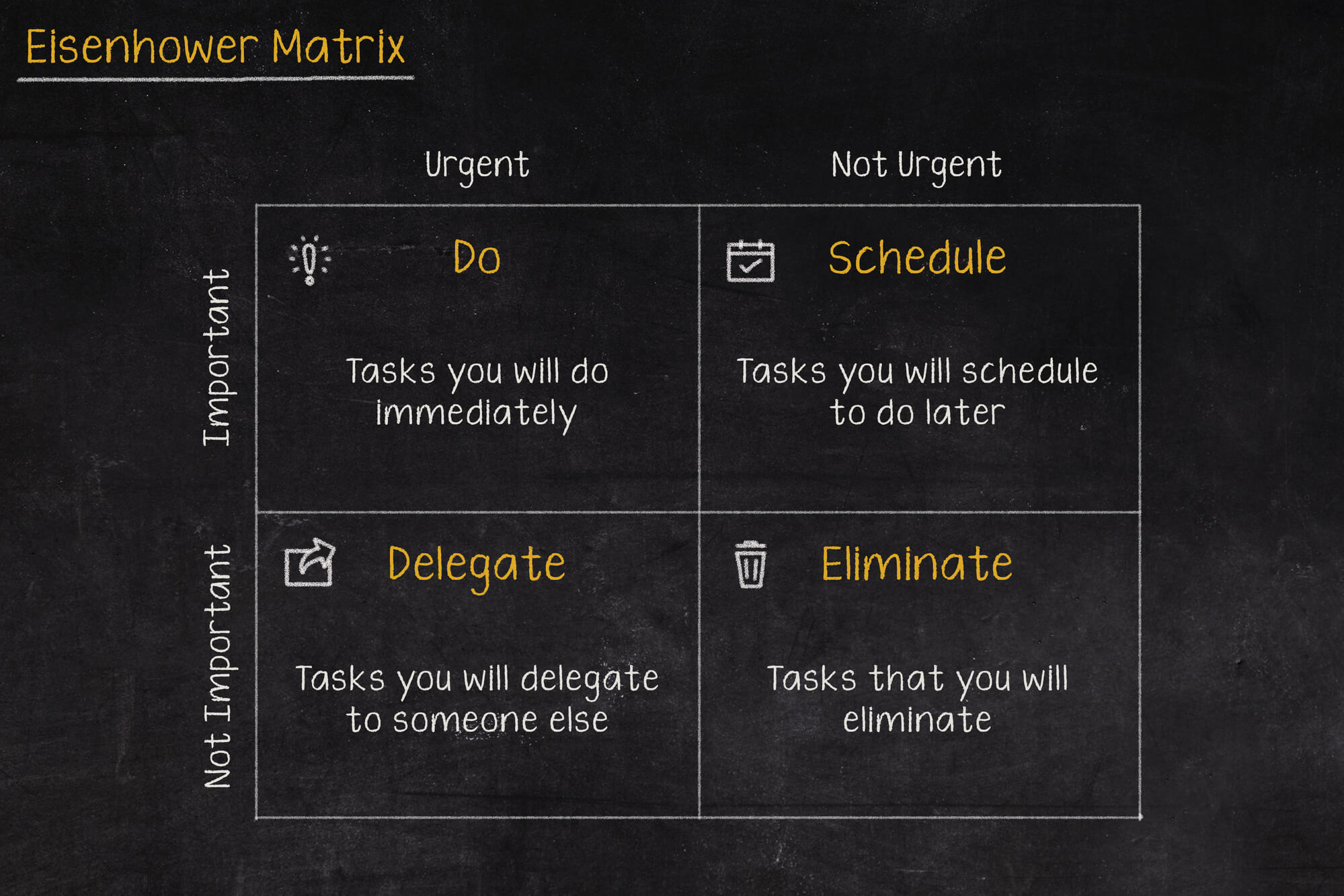

Macierz Eisenhovera, czyli jak zapanować nad priorytetami?

Iść na przerwę a może odpisać na tego maila, czy odebrać telefon od przełożonego? W jakiej kolejności zająć się tymi zadaniami, aby nie utracić nad tym kontroli i nie popaść w bezsilność? Rozwiązaniem tych problemów może być Macierz Eisenhowera (nazywana także Matrycą lub Kwadratem Eisenhowera).

Czy Alert RCB powinien informować o wyborach prezydenckich?

Komunikacja w niebezpieczeństwie jest jednym z ważniejszych zagadnień jakie się porusza podczas żeglowania, latania czy nurkowania. Ostrzeżenia potrafią uratować życie, dlatego nie powinny być lekceważone, a tym bardziej nie powinny swoją treścią prowadzić do ich zignorowania.